After loading, I went on to merge each channel’s separate dataframes into a single one for convenience.

```python
frames = [...]  # the per-channel dataframes, e.g. channel_gen
df = pd.concat(frames, ignore_index=True, join="outer")
```

By this time, my dataframe had 5263 rows and 13 columns, with a lot of data unrelated to my project.

Columns to clean & wrangle:

- `subtype`: filter its values out of `df`, then remove the original column
- `ts`: change it to datetime, remove milliseconds, get days of the week, months of the year, type of the day, parts of the day
- `user_profile`: extract `real_name` into a new column, remove the original
- `attachments`: extract title, text, link into new columns
- `files`: extract `url_private` and who shared
- `reactions`: extract user, count, name of the emoji

Cleaning was tough. Since almost all the data was nested inside JSON objects, most of my project’s time was spent iterating on feature engineering tasks to derive variables I could use to train models. At the same time, extracting data is what I enjoyed the most. Below you can see a couple of examples of the functions created to draw insights from the dataframe.
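To make the `ts`, `user_profile` and `reactions` items from the column list more concrete, here is a minimal sketch of how such steps could look in pandas. It is not the code from the project: the helper names, the toy rows, the weekday/weekend reading of "type of the day", the hour cut-offs for "parts of the day", and the assumed shape of the nested fields (a dict with `real_name`, a list of dicts with `name` and `count`) are all assumptions made for illustration.

```python
import pandas as pd


def add_time_features(df: pd.DataFrame) -> pd.DataFrame:
    """Derive calendar features from a `ts` column holding epoch seconds."""
    out = df.copy()
    # Epoch seconds -> datetime, then drop the sub-second part.
    seconds = pd.to_numeric(out["ts"])
    out["ts"] = pd.to_datetime(seconds, unit="s").dt.floor("s")
    out["day_of_week"] = out["ts"].dt.day_name()
    out["month"] = out["ts"].dt.month_name()
    # Assumption: "type of the day" means weekday vs. weekend.
    out["day_type"] = out["ts"].dt.dayofweek.map(
        lambda d: "weekend" if d >= 5 else "weekday"
    )
    # Assumption: four equal parts of the day, cut on the hour.
    out["part_of_day"] = pd.cut(
        out["ts"].dt.hour,
        bins=[0, 6, 12, 18, 24],
        labels=["night", "morning", "afternoon", "evening"],
        right=False,
    ).astype(str)
    return out


def extract_real_name(df: pd.DataFrame) -> pd.DataFrame:
    """Pull `real_name` out of the nested `user_profile` dict, then drop it."""
    out = df.copy()
    out["real_name"] = out["user_profile"].map(
        lambda p: p.get("real_name") if isinstance(p, dict) else None
    )
    return out.drop(columns=["user_profile"])


def add_reaction_features(df: pd.DataFrame) -> pd.DataFrame:
    """Turn the nested `reactions` list into emoji names and a total count."""
    out = df.copy()
    # Messages without reactions hold a missing value instead of a list.
    as_lists = out["reactions"].map(lambda r: r if isinstance(r, list) else [])
    out["reaction_names"] = as_lists.map(lambda rs: [r.get("name") for r in rs])
    out["reaction_count"] = as_lists.map(
        lambda rs: sum(r.get("count", 0) for r in rs)
    )
    return out.drop(columns=["reactions"])


# Toy rows standing in for the real export (made-up values).
toy = pd.DataFrame(
    {
        "ts": ["1577869200.000200", "1578169800.000100"],
        "user_profile": [{"real_name": "Ada Lovelace"}, None],
        "reactions": [[{"name": "tada", "users": ["U1", "U2"], "count": 2}], None],
    }
)
cleaned = add_reaction_features(extract_real_name(add_time_features(toy)))
print(cleaned[["ts", "day_of_week", "day_type", "part_of_day", "real_name"]])
```

Each helper returns a copy, so the steps can be chained in any order and re-run without mutating the merged dataframe.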

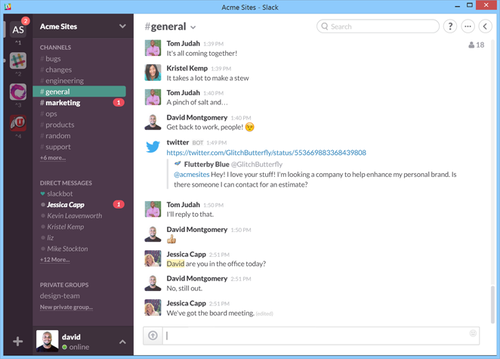

Ever wondered how engaging the content you delivered was? Was it clear or confusing? Did people misunderstand your message at that company-wide meeting?

Remote environments give teachers and leaders very little chance to get feedback and optimize their content for better performance.

Since a considerable part of my career had already been remote (in pre-COVID times, actually!), these questions sparked excitement and joy in my brain, hungry for creative solutions. I had the data; all I had to do was draft the questions I wanted to answer and then answer them.

This was also the final, end-to-end project of my Bootcamp, and I had subject matter experts at hand as stakeholders (my lead teacher and my project mentor) to direct me towards a value-driven product. My dataset, provided by the Slack admin, included the public Slack conversations from the first day to the last at the Ironhack Bootcamp. This could have been done through the Slack API as well, but that was out of scope for my project.

For the sake of keeping this blog post concise, note that I focused on highlighting the exciting parts of the code, not the insights it gave. For the visuals (done in Tableau), check out my presentation; for the detailed code, browse my GitHub repo.

I started off by loading each channel’s JSON files into a single dataframe. To give a sense of the challenge I had with the number of JSON files: the general channel’s folder alone contains all the conversations broken down by day. Here is the loading code for that channel:

```python
import glob
import os

import pandas as pd

# defining file path
path_to_json = './raw_data/general/'

# get all json files from there
json_pattern = os.path.join(path_to_json, '*.json')
file_list = glob.glob(json_pattern)

# an empty list to store the data frames
dfs = []
for file in file_list:
    # read data frame from json file
    data = pd.read_json(file)
    # append the data frame to the list
    dfs.append(data)

# concatenate all the data frames in the list
channel_gen = pd.concat(dfs, ignore_index=True)

# test
channel_gen.tail(100)
```
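Since the same loading step repeats for every channel, it could be wrapped in a small helper. The following is only a sketch under stated assumptions: `load_channel`, the extra `channel` column, the `random` channel name and the throwaway demo files are all hypothetical and not taken from the project.

```python
import glob
import json
import os
import tempfile

import pandas as pd


def load_channel(folder: str) -> pd.DataFrame:
    """Read every daily JSON file in one channel folder into one dataframe."""
    files = sorted(glob.glob(os.path.join(folder, "*.json")))
    frames = [pd.read_json(path) for path in files]
    if not frames:
        return pd.DataFrame()
    channel = pd.concat(frames, ignore_index=True)
    # Hypothetical extra column so the channel is still known after merging.
    channel["channel"] = os.path.basename(os.path.normpath(folder))
    return channel


# Demo with throwaway files (made-up messages, not the real export).
with tempfile.TemporaryDirectory() as root:
    for channel_name, days in {"general": 2, "random": 1}.items():
        os.makedirs(os.path.join(root, channel_name))
        for day in range(days):
            path = os.path.join(root, channel_name, f"2020-01-0{day + 1}.json")
            with open(path, "w") as fh:
                json.dump([{"text": f"hello {day}", "user": "U1"}], fh)

    channels = [load_channel(os.path.join(root, name)) for name in ("general", "random")]
    df = pd.concat(channels, ignore_index=True, join="outer")

print(df.shape)
```

Tagging each row with its channel before concatenating keeps that information available once everything lives in one dataframe.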